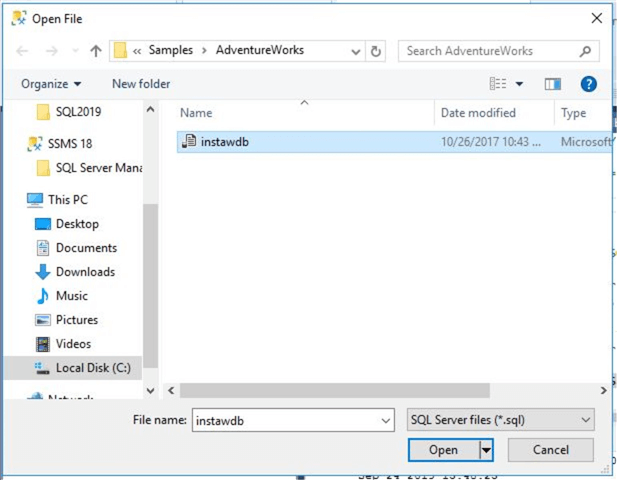

To force load option, set force parameter to true. For example snowflake remembers the files which are loaded, when you try to reload the same file, load doesn’t happen as the same is loaded within certain point of time. There are multiple options to copy into object within Snowflake. Use this command to generate copy commands for all tables created on snowflake select 'copy into ' || Table_Schema || '.'|| Table_Name || ' from pattern=\'' || Table_Schema || '.' || Table_Name || '.csv\' file_format=my_csv_format force=true ' as Load_Script from information_schema.tables Finally load data from Azure blob storage into Snowflake Tables // 1.To create a azure stage on Snowflake create or replace stage my_azure_stage url= 'azure://.net/csvfiles' credentials=(azure_sas_token= '?sv=&ss=b&srt=sco&sp=rwdlac&se=&st=&spr=https&sig=JGclySQxmrAkw3YbCtD%2Bt4vbkys3B3nh8RScTOsCiGM%3D') // 2.To create a file formation definition create or replace file format my_csv_format type = csv field_delimiter = '|' skip_header = 1 null_if = ( 'NULL', 'null') empty_field_as_null = true compression = GZIP FIELD_OPTIONALLY_ENCLOSED_BY = '"' // 3.Copy data into Snowflakecopy into SALESLT.ADDRESS from pattern='' file_format=my_csv_format force=true //4. Step3: Create an external stage on Snowflake database to point Azure blob storage container, and create file format definition to read. One of the important factor to know about Snowflake that it support only of collation type of case sensitive. Best is to study and understand them from Snowflake. There are slight deviations on data type names between SQL Server and Snowflake such as bit-boolean, datetimeoffset,timestamp_tz, etc. Snowflake data types could be found from here. Pay attention during this exercise to check for any issue. Script copies default constraint from SQL server which may not work on Snowflake. There may be few issues during table creation due to default constraints. Get required table creation scripts from GitHub to execute on Snowflake. Also to identify Primary and Foreign Key relations and apply them into Snowflake objects. To achieve this, we use information_schema from SQL server to Generate table scripts based on Snowflake data type (Scripts from Github). Bulk copy data tutorial from Microsoft helped me to build this pipeline.Īll other blob storage file creation parameters such as Encoding, Null treatment, delimiter changes, etc could be modified as required from ADF Json configuration. From Azure Data Factory pipeline versions are directly checked into GitHub for version management. Created an ADF pipeline (Source code checked in Github) which was used to extract data into blob storage. This helped to understand and select best file format for data migration.

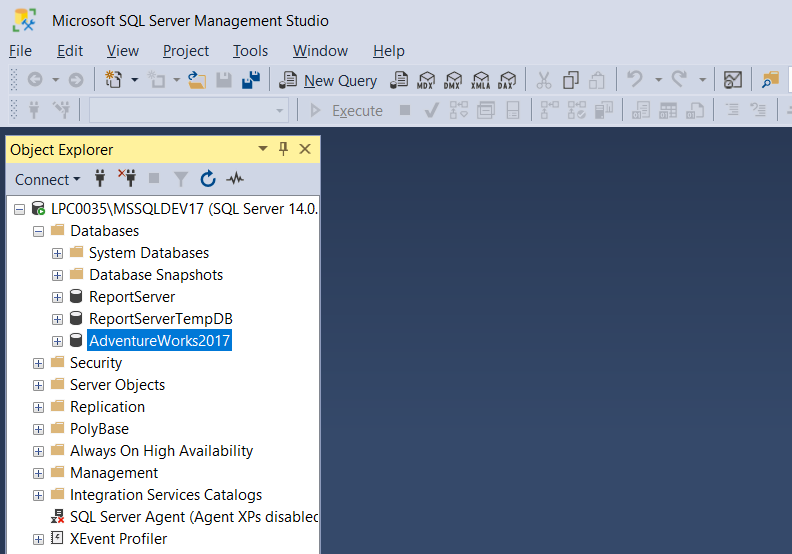

While data could be exported into various formats such as Parquet, Avro, ORC and Json, I choose csv + gzip by looking at Snowflake load performance figures. Gzip compression technique is used to occupy less storage space. Generic data format of csv with pipe delimited (|) and Text qualifier (“) is used. Step1: Using Azure Data Factory connect to SQL Database and upload table data into blob storage. Design for the migration is three step approach. This article explains the Copy into from blob storage approach to migrate data from SQL Server into Snowflake. While searching for How to Migrate large volumes of data from Snowflake forums (need login), I found that using Copy into is the best way to load data either from AWS S3 / Azure Blob storage.This is not performant way to load data into Snowflake. ODBC linked server setup guide is available (“snowflake linked server” keywords away from google). Setup Snowflake as a linked server within SQL Server (For Azure SQL DB directly this is not possible) and insert records from SQL to Snowflake.Generally, there are two approaches to perform database migration, Same framework could also be use to migrate any database from SQL Server into Snowflake using Azure Data Factory and Azure Blob Storage.ĭata migration from SQL Database to Snowflake is not as easy as to backup and restore. In this article an attempt has been made to build a generic framework to migrate Adventureworks/DW database. But an excellent choice for Analytics workload.

While Snowflake is not a best choice for OLTP applications. Ideally organization would prefer to migrate their own database to Snowflake for a cost/performance PoC. Migration of AdventureWorks/DW from Azure SQL DB into Snowflakeįor all who wants to make a cost and performance comparison between Azure SQL DB Vs Snowflake, Adventureworks and AdventureworksDW are excellent database which could be utilized as a reference between both platform.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed